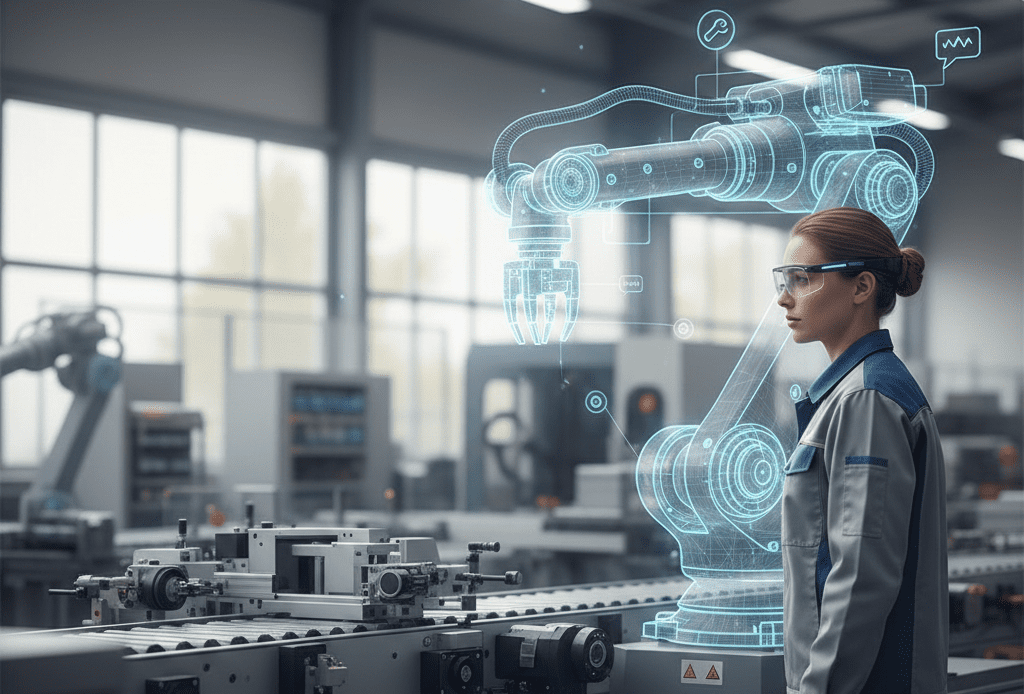

A machine stops mid-shift. The operator hears a familiar tone: fault again. But this time, instead of hunting through manuals or waiting for a specialist, they look at the machine through smart glasses. The relevant panel is highlighted. A few sensor trends appear where they matter, not on a distant dashboard. A conversational assistant asks a clarifying question, proposes two safe intervention paths, and explains the trade-off. A remote expert joins the same view, drops a marker on a specific bolt, and the digital twin in the background runs a quick “what-if” check before the first tool is even picked up.

That is the feel of manufacturing XR in 2026: less “wow demo,” more quiet competence, and a more human-centric approach, consistent with the Industry 5.0 push toward tighter collaboration between people and intelligent systems [1].

It’s also the direction behind XR5.0, our Innovation Action project financed by the Horizon Europe Program. XR5.0 is building a person-centric, trustworthy, AI-powered XR ecosystem for manufacturing, in which human-centric digital twins, explainable AI, and generative AI tailor XR experiences to real workers, real contexts, and European values (privacy, security, data sovereignty).

What’s changing isn’t XR alone, but how three layers are starting to lock together: XR as a spatial interface [2], digital twins as living models of assets and processes [3], and generative AI as the “translation layer” that turns data and procedures into guidance people can act on.

XR is becoming the factory’s user interface

Manufacturing has used XR for years (training, assembly guidance, inspection, remote support) because it reduces errors, improves safety, and speeds up learning [4]. What’s new is how operational it’s becoming: XR is moving from pilots into daily routines, while digital twin simulations are increasingly used for planning, optimization, and proactive decisions [3].

As this shift happens, expectations rise. Overlays alone aren’t enough. Workers want systems that adapt to shop-floor reality: noise, gloves, time pressure, mixed skill levels, and constant change. That is where AI, especially generative AI, begins to change the experience.

What generative AI adds (beyond automation)

In 2026, the value of generative AI in XR is less about futuristic autonomy and more about three practical upgrades.

1) Procedures become situational guidance. Maintenance and repair are classic AR territory: diagrams, sequences, and in-context instructions to reduce guesswork and speed fixes [5]. The limitation is that procedures are usually written for an “average” scenario. Generative AI can make XR guidance more conversational and responsive: summarize what matters now, adapt steps to the observed situation, and answer follow-up questions hands-free.

2) Training feels less like content and more like coaching. XR training can reduce training time, improve retention, and allow safe practice without risking downtime or injury [4][5]. But scaling it has often meant scaling content production, which is slow and costly. Market signals increasingly point to AI as the way through this bottleneck: adaptive learning paths, real-time adjustment of difficulty, and faster creation and maintenance of training materials are becoming requirements for broad rollout [6]. Generative AI doesn’t replace expertise, but it can compress the loop from expert know-how to usable training, especially when product variants and procedures change frequently.

3) Digital twins start to speak “human.” Digital twins can be powerful yet hard to interrogate. XR already helps people “step into” complex twin data spatially [3]. Add generative AI, and the twin becomes easier to question: What changed since yesterday? What’s the likely cause? What’s the safest intervention? The answers are grounded in telemetry and history, not guesswork. This is where the “industrial metaverse” idea becomes tangible: real-time interaction with physical entities mirrored into a virtual space, enabling proactive problem-solving rather than reactive firefighting [1].

What’s working on factory floors in 2026

The most convincing progress isn’t one killer app; it’s repeatable patterns.

- AR inspection and maintenance toolkits increasingly blend live data with contextual overlays and knowledge modules [7].

- Collaborative maintenance connects field technicians with control-room colleagues or remote experts, reducing risk and speeding resolution [7].

- XR-based design reviews and virtual commissioning reduce late-stage errors and accelerate iteration, especially where physical prototyping is expensive or disruptive [8].

Across all three, the goal is the same: compress the distance between knowing and doing.

The invisible infrastructure behind “it just works”

When AI-powered XR works well, the infrastructure disappears, but it’s doing heavy lifting:

- 5G and edge computing help erase latency barriers for real-time, multi-user collaboration and on-floor visualization [6].

- Research partnerships are explicitly exploring XR + digital twins + AI over 5G/5G Advanced for immersive training and real-time process optimization across sites [9].

- For high-fidelity twins, hybrid approaches (local + streamed rendering) are positioned as a way to scale realism without putting all compute on the device [10].

- And open standards such as OpenXR matter for interoperability and ecosystem maturity [6].

The hard part: trust, privacy, and human reality

Industry 5.0 is not just about capability; it’s about trust and human-centricity [1]. XR makes this unavoidable because headsets and smart glasses can capture sensitive environments and worker-related signals. Security and privacy concerns are real: XR devices can “see,” and organizations need risk assessment, policies, and careful data management, especially where IP or personal data could be exposed [11]. Adoption also depends on human factors; comfort and all-day wearability remain practical constraints for some users [6]. The next wave won’t be won by the flashiest demo, but by solutions workers accept, supervisors trust, and IT can secure.

Where XR5.0 fits

Many deployments still assume a generic user and fixed workflows. XR5.0 starts from the opposite assumption: people differ, contexts change, and XR must adapt safely and transparently. That means human-centric digital twins for real-time adaptation, generative AI inside XR (including a chat engine for context-aware assistance and maintenance), explainable AI for transparent human–AI collaboration, and a strong commitment to privacy, security, compliance, and data sovereignty. It is being validated through six real manufacturing pilots where XR has proven ROI and where generative AI can remove scaling bottlenecks.

Closing thought. AI-powered XR is starting to feel less like “technology added to manufacturing” and more like manufacturing learning a new language: spatial, interactive, and collaborative. The best versions won’t replace people. They’ll raise the floor: safer work, faster learning, and more first-time-right execution. That’s the promise of Industry 5.0 [1], and the trajectory XR5.0 is building toward.

References

[2] What is XR, and how is it radically transforming industries?

[3] Business Reporter — Digital twins, extended reality and manufacturing’s quiet revolution.

[4] How VR Technology Is Altering Manufacturing.

[5] XR/AR in Manufacturing: 7 Use Cases with Examples (’26).

[6] Extended Reality (XR) Market Size, Trends & Share Analysis, 2026–2031.

[7] Unity — How XR Reinvents the Manufacturing Factory Floor.

[8] ABI Research — Three Ways XR Revolutionizes Industrial Machinery Manufacturing.

[10] Immersive Learning News — NVIDIA’s New Superpowers: Digital Twins & AI-powered XR, partnership with Apple and Magic Leap.

[11] VIVE Blog — XR in Manufacturing: Considerations from Industry Professionals.